How to effectively A/B test in a way that drives long-lasting results

So, your company wants to increase revenue and adoption by making some marketing site tweaks. They want more conversions, more clicks, more shares, and more users. What do they tell you to do first? A/B test! Compare two versions of a page, define a key goal (e.g., clicks), and see which version gets more clicks. But does this actually work? Is it really the approach you should take? Let’s look at the data.

By nature, an A/B test is an experiment that assesses multiple (often 2) versions of a feature or page relative to a defined metric.

This article focuses on superficial A/B tests — the testing of cosmetic changes that distract teams from delivering meaningful customer value.

A/B Testing Band-aid

For apps with millions of users, small cosmetic changes such as color, layout, and language could net you noticeable increases in key metrics like clicks and user engagement. The real question is this: if your company has a smaller user base, should you focus on different “Sign Up!” button colors or on actually making your product better?

For many companies, A/B testing becomes a band-aid for a poor value proposition. If your content is not being shared, maybe it is simply not share-worthy, no matter how amazing you make your “Share Now!” button.

Moreover, maybe your goal should not be getting a prospect to click on a button. Maybe your primary goal should be to build trust, provide a sandbox demo, or empower the prospect to make a decision.

Survey Data

AppSumo estimated that only 25% of A/B tests actually produced significant results. The question is: why? Well, let’s define significant results. For many of these tests, the primary metric organizations were trying to move was conversion rate. So, if the rate did not increase, the test was counted as a failure.

Less than 25% of A/B tests produced significant positive results — AppSumo

However, we could look at it a different way. If changing your header slogan, hero image, or CTA didn’t move metrics, then maybe that is indicative of a larger issue. A failed test should be an indicator that:

- Your visitors are not ready to convert

- Your visitors are seeking something else besides signing up

- Your core product is fundamentally unattractive

- You need to drive more qualified leads to your product

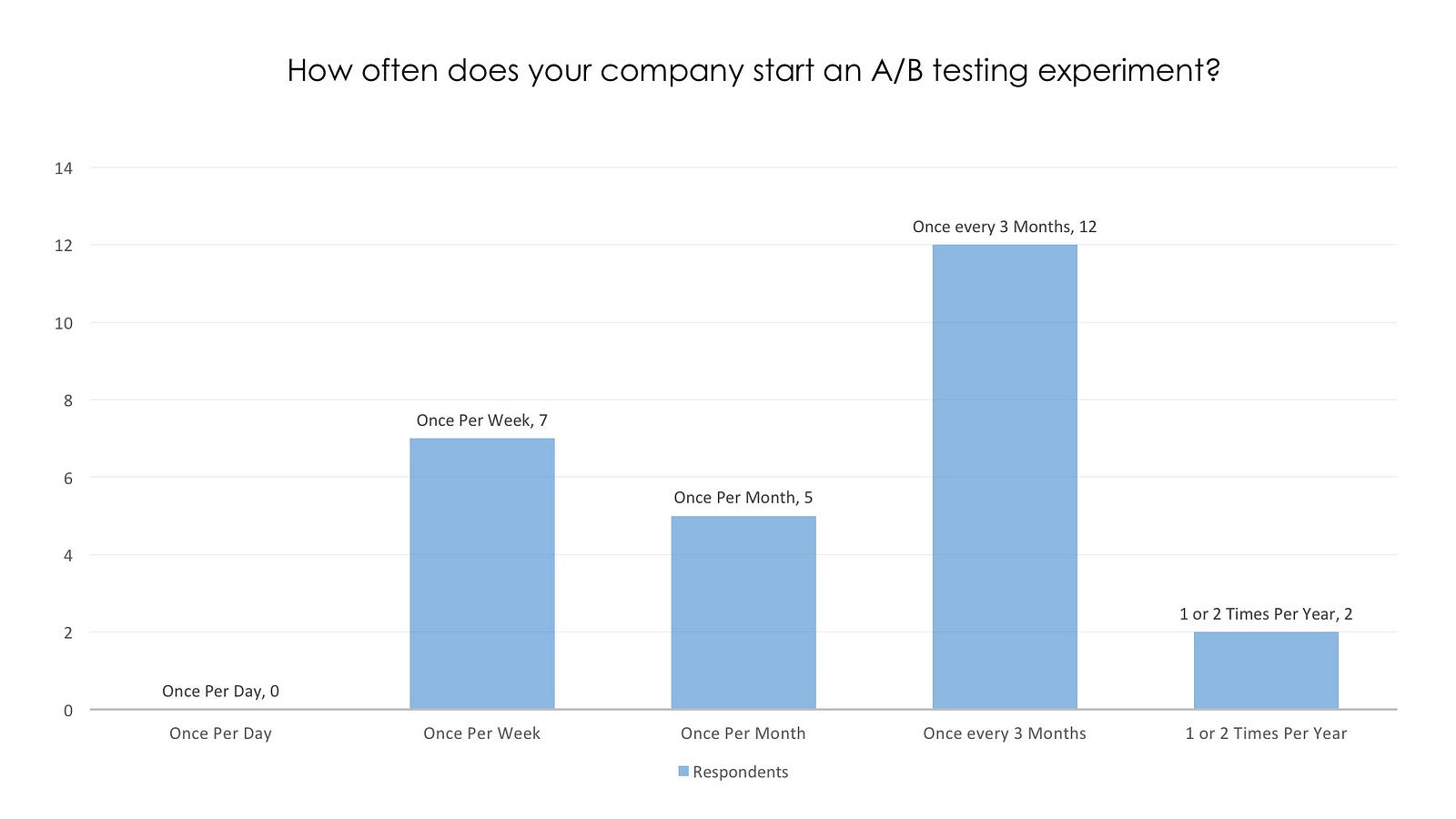

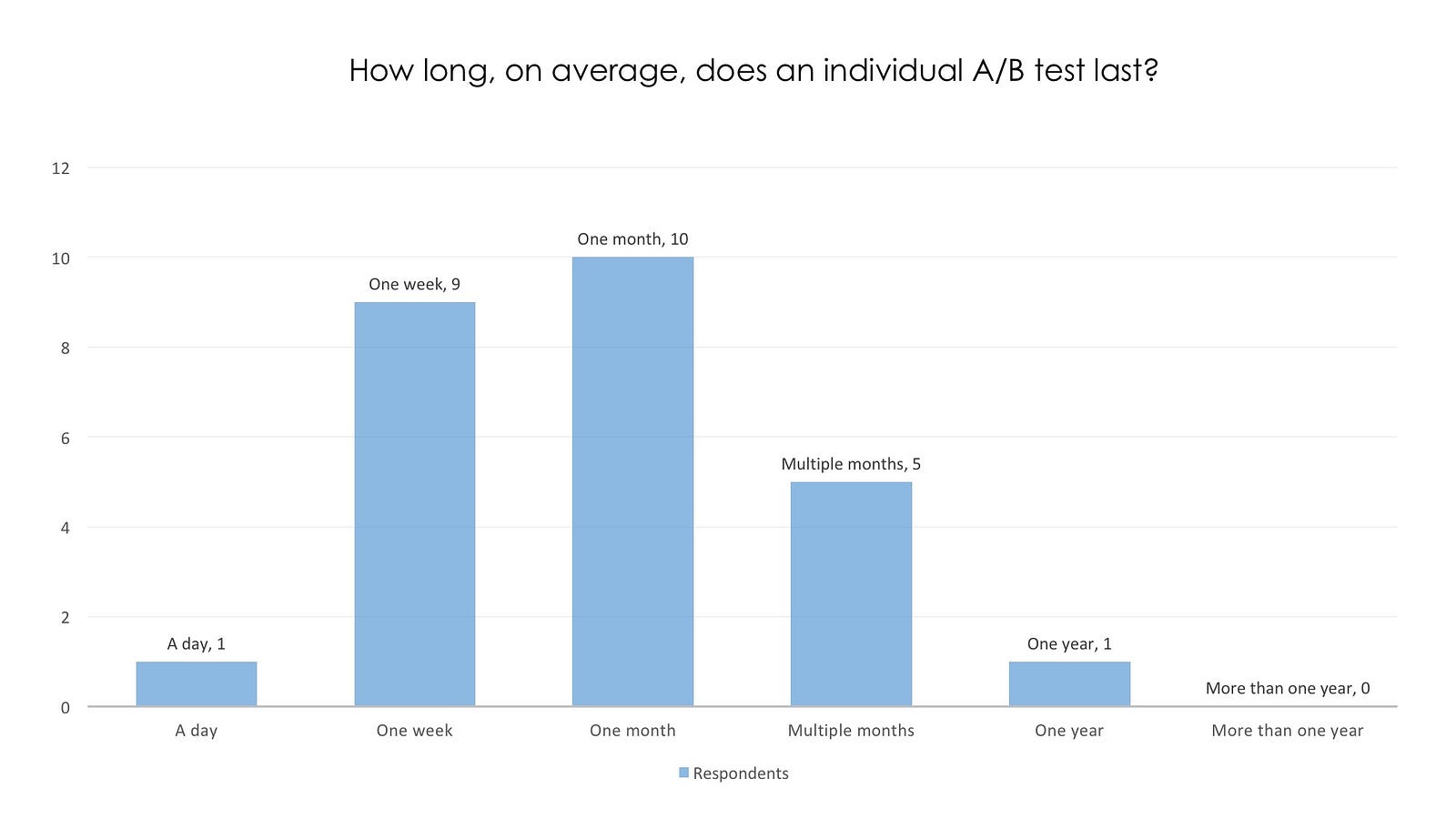

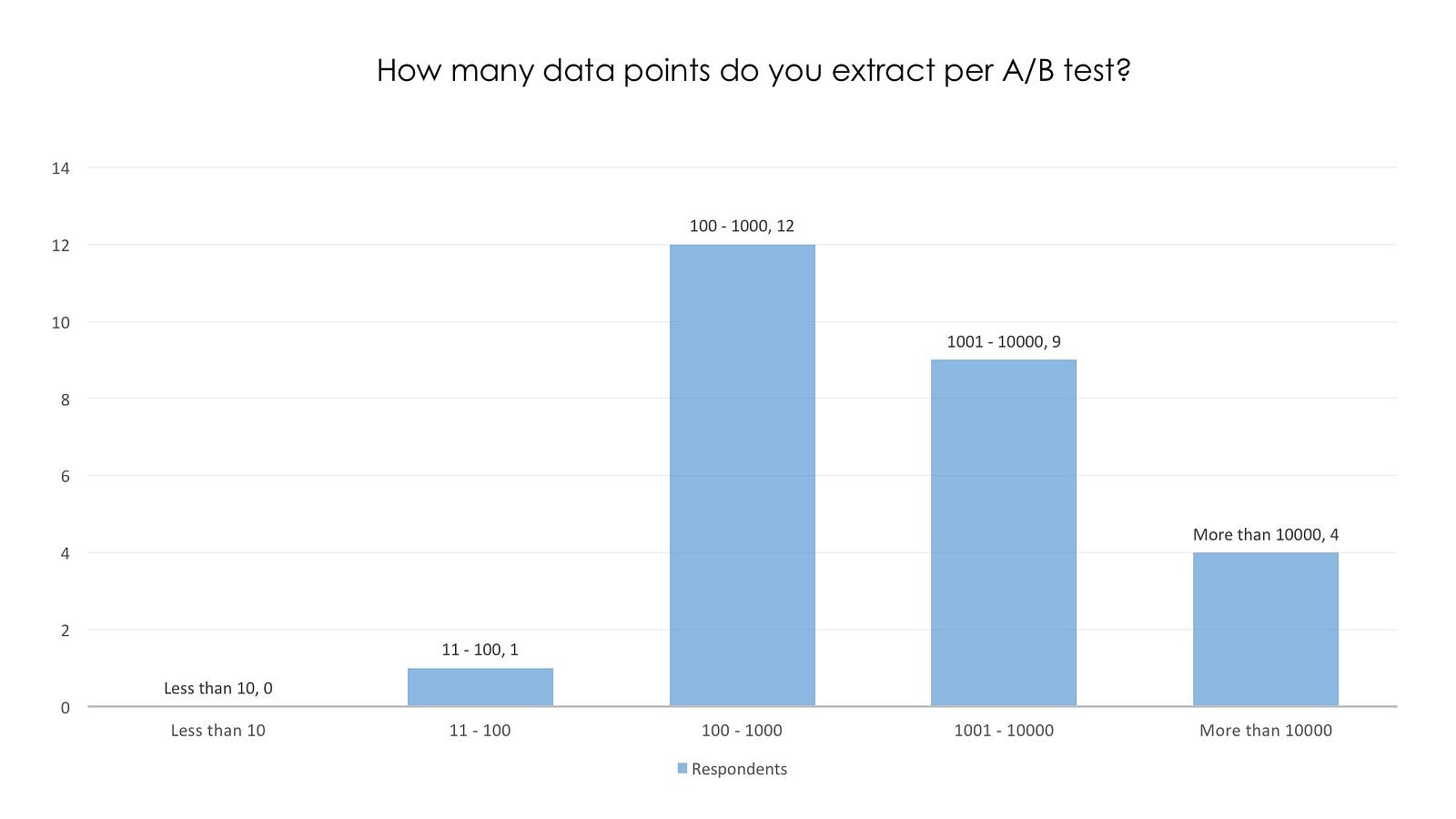

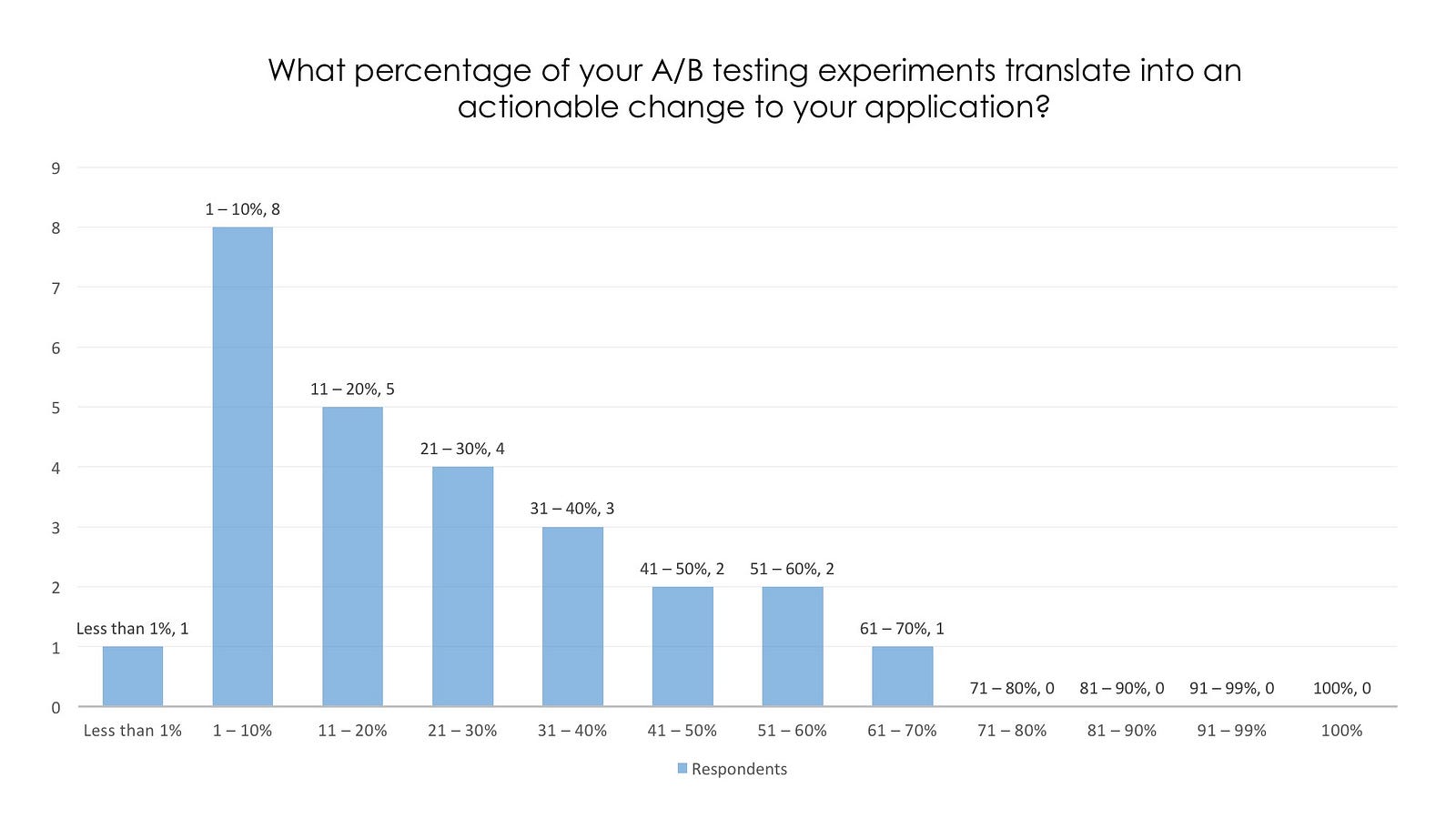

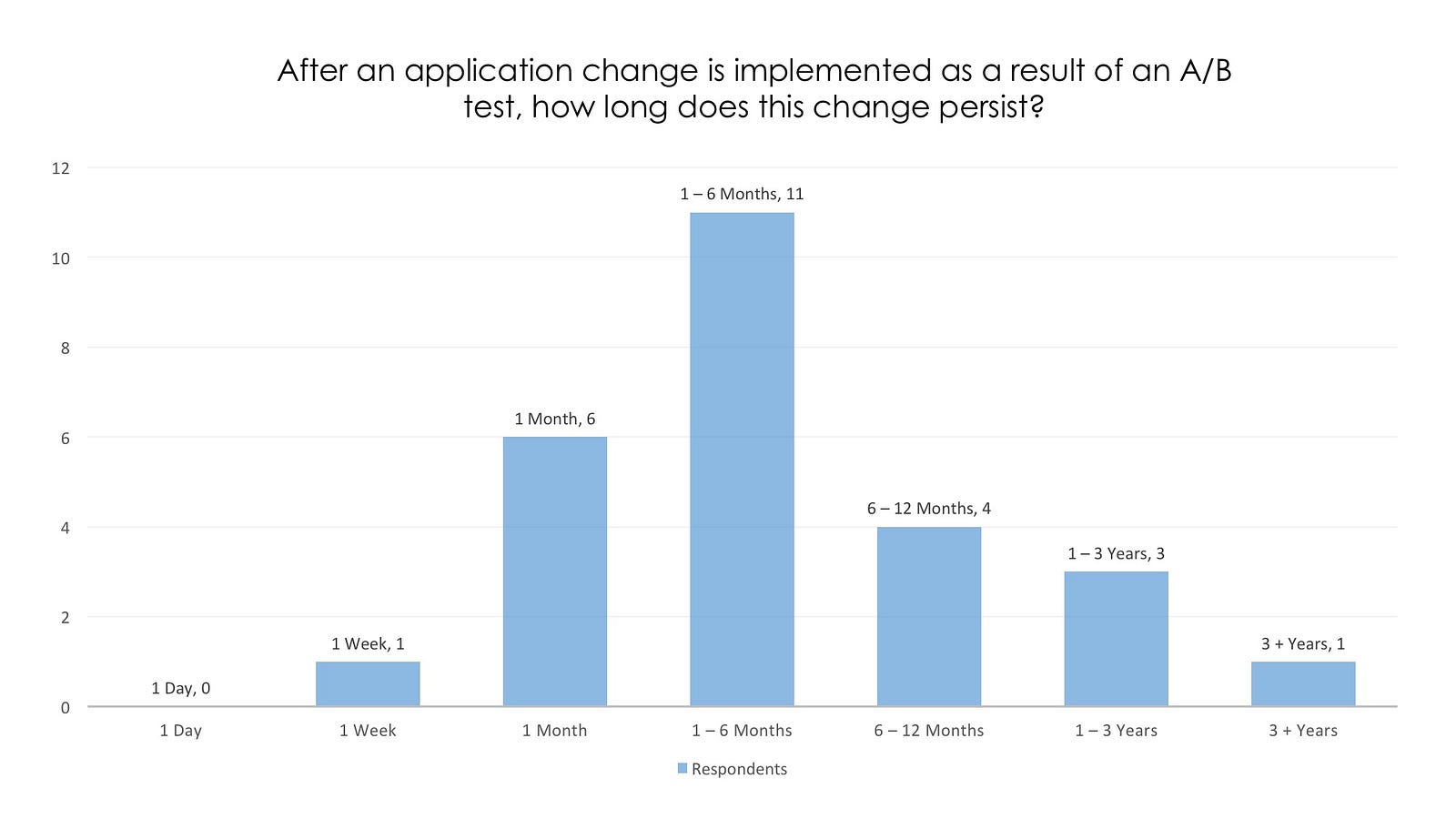

This next dataset is from a qualitative and quantitative survey of 26 A/B testing practitioners conducted from May 1 to May 30, 2016 (Northwestern, IDS, Justin Baker, 2016). While this is not the be-all and end-all of surveys, it can still give us some meaningful insights.

Key Points

- 45 percent of respondents said that their companies start a new A/B test once every 3 months, while 40 percent start one every week or month;

- 60 percent of respondents said that their A/B tests last between 1 week and 1 month;

- 38 percent of respondents said that fewer than 10 percent of their A/B testing experiments actually translate into action; and

- 45 percent of respondents said that application changes from A/B testing persist for 1 to 6 months.

38% of respondents said that fewer than 10% of their A/B testing experiments resulted in actionable change, that is, formally releasing a new version of a page or feature.

Interview Data

To supplement the quantitative research, A/B testers (with 2 to 6 years of A/B testing experience) were asked open-ended questions about the efficacy of A/B testing. Here are some of the key takeaways:

50% of teams could not make decisions from A/B testing experiments due to inconclusive or poorly measured data

- 10 of 12 interviewees noted that a major drawback of A/B testing was that 90 percent or more of experiments ‘failed’;

- 6 of 12 interviewees noted that it was very difficult to make product decisions based on A/B testing results since most results were inconclusive or clearly failed. This means that the status quo was maintained roughly 90 percent of the time; and

- 10 of 12 interviewees noted that the primary benefit of A/B testing was to ‘increase revenue’.

Making A/B Testing Useful

Overall, what do these results tell us?

Companies may be running A/B tests too frequently for too little time, contributing to a high failure rate that makes A/B test results less valuable and meaningful.
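One way to judge whether a test is running for "too little time" is to estimate, before it starts, how many visitors each variant needs. Below is a minimal Python sketch, assuming a two-sided two-proportion z-test with conventional defaults (5 percent significance, 80 percent power). The function names, the example conversion rates, and the traffic figure are illustrative assumptions, not values from the survey.

```python
from math import ceil, sqrt
from statistics import NormalDist


def visitors_per_variant(baseline_rate, expected_rate, alpha=0.05, power=0.80):
    """Approximate visitors needed in EACH variant to detect the given lift.

    Uses the standard normal-approximation formula for comparing two
    proportions. All defaults are conventional choices, not requirements.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided critical value
    z_beta = NormalDist().inv_cdf(power)
    mean_rate = (baseline_rate + expected_rate) / 2
    numerator = (
        z_alpha * sqrt(2 * mean_rate * (1 - mean_rate))
        + z_beta * sqrt(
            baseline_rate * (1 - baseline_rate)
            + expected_rate * (1 - expected_rate)
        )
    ) ** 2
    return ceil(numerator / (expected_rate - baseline_rate) ** 2)


def days_to_run(baseline_rate, expected_rate, daily_visitors, **kwargs):
    """Days needed if daily traffic is split evenly across two variants."""
    needed = visitors_per_variant(baseline_rate, expected_rate, **kwargs)
    return ceil(2 * needed / daily_visitors)


# Hypothetical scenario: 20% baseline, hoping for 22%, 500 visitors per day.
print(visitors_per_variant(0.20, 0.22))
print(days_to_run(0.20, 0.22, daily_visitors=500))
```

Under these assumptions, detecting a two-point lift takes several thousand visitors per variant, which a low-traffic site will not reach in a week. Smaller expected lifts push the required sample size up sharply.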

Here are some tips to help make A/B testing useful for your application.

- Don’t get distracted: Changing colors, call-to-action text, and layout may have a marginal impact on your key performance metrics. However, these results seem to be very short-lived. Sustainable growth does not come from changing a button from red to blue; it comes from building a product that people want to use.

- Don’t put lipstick on a pig: The better version of two pigs is still a pig. If you are trying to sell a pig, then you are doing great. If not, then focus on creating better user experiences and a better value proposition.

- Use actual statistics: Do not rely on simple one-to-one comparisons to decide what works and what does not. “Version A yields a 20 percent conversion rate and Version B yields a 22 percent conversion rate, therefore we should switch to Version B!” Please do not do this. Use confidence intervals, z-scores, and tests of statistical significance.

- The longer, the better: The longer you run your test, the better your data will account for fluctuations and extraneous variables. Do not run a test over Memorial Day weekend using a red/white/blue theme and then switch to that theme for the rest of the year.

- Failure is okay, but failure is expensive: If you keep releasing versions of your application that people hate, what impact is that having on your metrics? If most experiments fail, are you doing more harm than good? How much time are you spending designing and implementing A/B testing experiments? Failing and experimenting are natural byproducts of building a company. If something is not working, maybe it is not because your button needs to be a brighter color; maybe it is because you need to make your feature better.
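To make the "use actual statistics" tip concrete, here is a minimal sketch of a two-proportion z-test with a confidence interval, written with only the Python standard library. The function name and the visitor counts are hypothetical; only the 20 percent and 22 percent conversion rates come from the example above. It is one common way to check significance, not the only one.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b, confidence=0.95):
    """Compare conversion rates of variant A and variant B.

    Returns (z, p_value, (ci_low, ci_high)), where the interval covers
    the difference rate_b - rate_a.
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    diff = rate_b - rate_a

    # Pooled standard error under the null hypothesis of "no difference".
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se_pooled = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = diff / se_pooled
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))  # two-sided

    # Unpooled standard error for the confidence interval on the difference.
    se_diff = sqrt(rate_a * (1 - rate_a) / n_a + rate_b * (1 - rate_b) / n_b)
    z_crit = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    return z, p_value, (diff - z_crit * se_diff, diff + z_crit * se_diff)


# Same 20% vs. 22% rates, two hypothetical traffic levels per variant.
small = two_proportion_z_test(conv_a=100, n_a=500, conv_b=110, n_b=500)
large = two_proportion_z_test(conv_a=2000, n_a=10000, conv_b=2200, n_b=10000)

for label, (z, p, ci) in (("500 visitors each", small),
                          ("10,000 visitors each", large)):
    print(f"{label}: z={z:.2f}, p={p:.4f}, CI=({ci[0]:.4f}, {ci[1]:.4f})")
```

With 500 visitors per variant, the confidence interval for the difference includes zero, so the two-point gap could easily be noise. With 10,000 visitors per variant, the same gap clears the conventional 5 percent threshold. The rates alone do not tell you which situation you are in.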

TLDR

Effective A/B tests are about driving long-lasting, positive value for your customers. If you get stuck in a loop of trivial changes, then you’re basically churning quicksand instead of moving your product forward.

Effective A/B tests are about problem solving — driving long-lasting, positive value for your customers.

Test meaningful features, use real statistics, get real feedback, and run tests for longer periods of time. Deliver true value to your users instead of playing with color hues and clever taglines. I’m not trying to downplay the importance of an optimized layout, powerful copy, and a fluid information hierarchy. I am trying to get teams to think about improving user experiences by adding value and solving problems, not by throwing lipstick on a pig or wordsmithing a new headline.